The First Real Breakthrough in Data Storage

Learn about INFINIDAT’s use of ‘tries,’ a data structure that transforms how data is persisted on disk and helps make the InfiniBox what it is today. Transcript below:

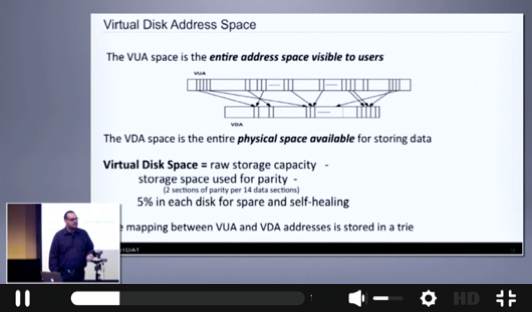

[Brian Carmody, CTO, INFINIDAT] I'm going to now share with you what I consider to be the first, real breakthrough that makes InfiniBox possible. And it is the way that we actually do mapping between these two. We store the mapping between the virtual space that's visible to users and the physical space for storing data in a trie data structure. So it's said that behind every great product is a great data structure. For us it is a trie.

Despite what Yoda says, there are many tries in the InfiniBox world.

[Audience Member] For a poor, old chemistry major, what's a trie?

[Brian Carmody] A trie is an ordered tree data structure. But it has a couple of fascinating little properties that make it exceedingly good for storage virtualization. It's what makes virtualization at this scale possible. What's special about a trie as opposed to other types of trees for storing data is that the nodes themselves do not store the keys. Rather, it's the specific traversal from null down to the key, that has encoded in it, the key, which for us is a pointer to the location.

[Audience Member] So it's not the destination, it's the journey?

[Brian Carmody] It's the journey, yes. So tries are inherently compact. They also have other properties. They have locality of reference built into them. So they compute exceedingly well and cache exceedingly well on modern CPUs with out-of-order execution.

But there's also something about our implementation, which makes it super space efficient, as well. Each entry in the trie is a pointer, from an address space visible to users, and a physical location on disk.

Notice in this example that the sixth rectangle is much bigger than all the others. But it's a single pointer to a location on disk. Tries allow us to have an infinitely variable block size or section size between this mapping. And what this means is that when you write a single file to our file system, regardless of its size, it’s exactly one entry in the trie that we have to create. Similarly, if you are writing to a block volume, and you write a huge backup job, like a huge sequential or whatever -- In principle, regardless of how big it is, it's only a single entry that we have to make. So we exploit sequentialness to keep the trie compact.

So space compactness is good because it allows us to save memory, it keeps the data structure small. But what really matters, and the real game changer for us, is the performance.

Tries are unicorn data structures. They are 0 of 1 for both inserts and searches. And think about a virtualized storage system. A search is a read. Give me this data, at this address, where is it located. That's a search in the trie to find where the data is. An insert is write. Here is a piece of data, go store it somewhere. That mapping between the address that it was written to and where we actually stored it, is a write.

So, stuff like this as technologists, we usually think about as undergraduates. Maybe for doing a coding interview they'll make you do a trie and then you never think about them. But the performance of core data structures, when you're thinking about exabytes scale computing, the performance of court data structures is critically important. And the key takeaway with this is, from the first block of data that's written into this, up to the theoretical limit, which is two to the 64 files for us in the file system, and its effectively infinite within provisioning for the block, the performance, the look-up time of the core data structure is absolutely flat.